BHI260AP Sensor Trigger Chaining

09-17-2021 12:27 AM

Good evening, I am trying to better understand the use case for sensor trigger chaining. In the programmer's guide I see a high-level example where a physical sensor may trigger one or more virtual sensors. I understand the one-to-many relationship between a physical sensor and a number of virtual sensors that may do additional value-added processing or refinement.

1) Is there a use case (or capability) whereby a single physical sensor would trigger a sequence of multiple virtual sensors? Is each virtual sensor chained to a previous virtual sensor?

2) Is there a capability for a virtual sensor to depend on data aggregated from multiple physical sensors in order to draw a conclusion and report to the host?

Thank you.

09-23-2021 09:42 AM

Hi not_karl,

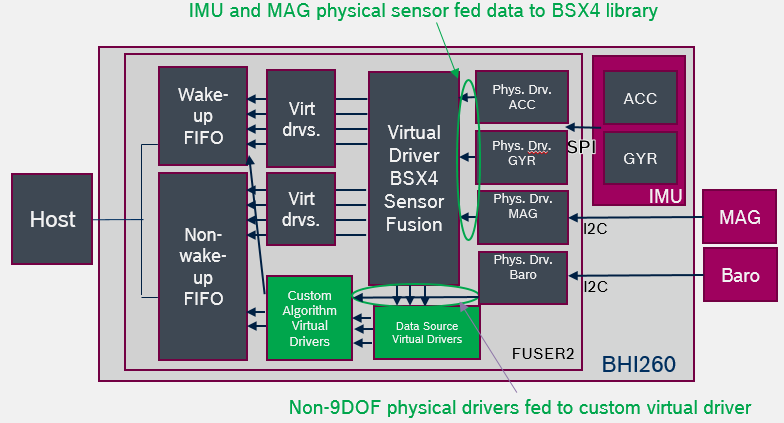

Before answering the question, we first have to determine whether the customer's virtual sensor is BSX-related, that is, whether its physical source is ACC, GYR, or MAG. If it is, the physical sensor should not be used directly as the trigger source. Instead, the data should go through the BSX library, and a BSX custom virtual sensor should be used as the source. The custom BSX virtual sensors are designed to serve as sources for customer sensors implemented in the SDK.

If the customer's sensor does not use ACC, GYR, or MAG as its data source, the trigger source can be the physical sensor itself, for example a virtual barometer sensor triggered by a physical pressure sensor.

To answer your question: besides the obvious case of one virtual sensor triggered by another sensor, there are two cases that may be of interest to you, as you mentioned:

Case 1. Multiple virtual sensors triggered by the same sensor.

Case 2. A single virtual sensor depending on multiple sensors as sources.

The first case is very common. For example, the ACC source can be the trigger source for both a step counter and a motion detector. (Note that for the virtual sensor design in this example, the custom BSX ACC should be used as the source.) When designing the virtual sensor descriptor, put the sensor type of the source in the structure member triggerSource.value. Another virtual sensor may use the same source sensor type. The triggering sequence is determined by each virtual sensor's priority value, which is also a member of the virtual sensor descriptor.
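As a rough illustration of Case 1, the sketch below models several virtual sensors that name the same trigger source and orders them by priority. It is not SDK code: `vs_desc_t`, its fields, and `vs_trigger_order` are hypothetical stand-ins for the real virtual sensor descriptor, and the convention that a lower priority value runs first is an assumption made only for this example.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, simplified descriptor. NOT the SDK's descriptor structure. */
typedef struct {
    uint8_t sensor_type;     /* ID of this virtual sensor */
    uint8_t trigger_source;  /* sensor type that triggers this sensor */
    uint8_t priority;        /* assumption: lower value is triggered first */
} vs_desc_t;

/* Collect every descriptor whose trigger source is `source`, sorted by
 * ascending priority. `out` must have room for `n` pointers.
 * Returns the number of descriptors written to `out`. */
size_t vs_trigger_order(const vs_desc_t *descs, size_t n, uint8_t source,
                        const vs_desc_t **out)
{
    assert(descs != NULL && out != NULL);
    size_t count = 0;
    for (size_t i = 0; i < n; i++) {
        if (descs[i].trigger_source != source) {
            continue;
        }
        /* Insertion sort keeps `out` ordered as matches are found. */
        size_t pos = count;
        while (pos > 0 && out[pos - 1]->priority > descs[i].priority) {
            out[pos] = out[pos - 1];
            pos--;
        }
        out[pos] = &descs[i];
        count++;
    }
    return count;
}
```

With a step counter and a motion detector both naming the custom BSX ACC as their source, a call with that source type would return both descriptors in the order in which they would be triggered under the assumed convention.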

In the second case, the designed virtual sensor is a fusion sensor, and it will very likely need an algorithm of its own because it is based on multiple data sources. The tricky part is that the timestamps of the sensor sources feeding the algorithm must match. In the implementation, the data from all sources should be handled in the same function, which stores the data and matches the timestamps. When data from all sources is ready, this function triggers the designed virtual sensor, which then runs its algorithm, if any.

10-10-2021 08:42 PM

Thank you, this is helpful. I have a tangible use case where I would like to use the combined data from the accelerometer with readings from an ambient light sensor in order to have a virtual sensor provide a derived data stream.

I would like to have the samples from both physical sensors available to the virtual sensor in order to derive a new outcome and report it to the end user. Without sharing variables between the drivers, is it possible to accomplish this?

From a triggering perspective, my preference would be to trigger this on the accelerometer (recognizing a tap activity) and allow the virtual sensor to combine this with data from the ambient light sensor.

I am trying to better understand how to accomplish this within the architecture of the BHI260.

10-11-2021 09:56 AM

Hi not_karl,

For the case of using accelerometer and light sensor data, besides the BSX_custom_accelerometer_corrected virtual sensor (from the BSX4 algorithm) and the light virtual sensor (from your own code), you will need a third virtual sensor, which could be called the TapActivity virtual sensor. Set the trigger sources of the TapActivity virtual sensor to the first two virtual sensors.

In the TapActivity virtual sensor, create a buffer to store the data and timestamps of the two virtual sensors' values. The timestamps are used to make sure the data from the different virtual sensor sources match. You can run your tap activity algorithm in the virtual sensor's data handler and report the algorithm output to the host.

10-11-2021 06:30 PM

Thanks, this poses a number of questions for me.

1) In the VirtualTapActivity virtual sensor do I have two triggerSource clauses or indicate multiple virtual sensors within the same clause?

2) Do I need to specify both physicalSensors as well in the descriptor?

3) In order to determine which sensor's data I am dealing with at the time of trigger, I assume I am looking at VirtualSensorDescriptor.triggerSource?

4) If I understand correctly, I need to use the timestamp from the two trigger sources in order to record and compose the data when there has been an event from both sensors in the same meaningful time window?

Thank you.
